Your Team Stopped Talking About AI Four Weeks Ago

Paid subscriber bonus: the Meeting Rhythm AI Integration Audit

Hi there,

Think about your meetings this week. The Monday standup. The project check-in. The one-on-one. How many of them mentioned AI? Not a dedicated AI session. A regular meeting. A passing reference. A question. Anything.

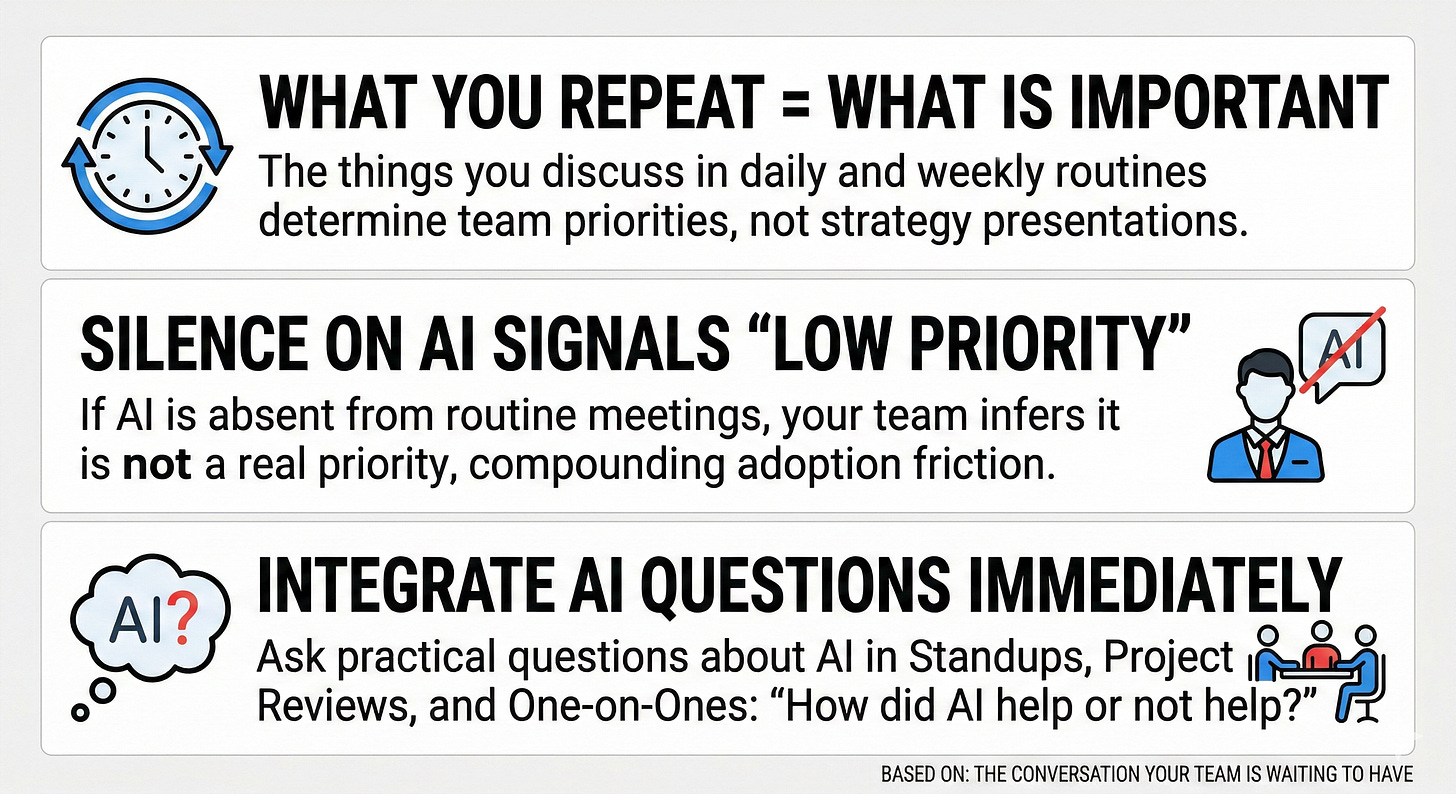

If the answer is zero, your team already knows where AI sits on the priority list. They did not need a memo. They inferred it from what survived contact with Monday. Silence was the message. And silence, in a team that watches everything the manager does, is louder than any strategy deck.

I have been writing about the friction points that block AI adoption and why leaders consistently start with the wrong one. This week is about the friction point you cannot see because it is not there. AI is absent from the conversations where adoption is actually decided, and that absence is doing more damage than most leaders realise, because it looks like nothing.

When I say “AI” here, I mean the practical stuff: the drafting, analysis, synthesis, and automation that either shows up in your Monday conversation or does not. Not a separate innovation programme. The work.

The signal you are sending every Monday

Teams take their cues from what the manager talks about in routine conversations. Not from the strategy presentation. Not from the training schedule. From what comes up on Monday.

A new initiative is announced. There is a launch. A training programme. An executive sponsor. The team hears the message: this matters. But that message has a half-life, and it decays with every routine meeting where the initiative is not mentioned.

In many technology change programmes I have worked on, the pattern is recognisable. Week one after launch, people mention the goal in meetings. Week two, fewer mentions. Week four, silence. Week eight, it is as if the initiative never happened. The half-life of initiative momentum, measured in meeting cycles. The meeting rhythm is the clock. And the clock runs whether the manager notices or not.

In my experience, the Monday meeting is where adoption lives or dies, not the quarterly review or the training session. The moment a manager does not mention AI in a routine meeting, the team receives a signal: this does not matter here. That signal compounds weekly.

This is not resistance from the team. It is local optimisation. They have limited bandwidth. They will focus on the things the manager asks about. If the manager asks about client deliverables, compliance deadlines, and project milestones but does not ask about AI, the team will prioritise client deliverables, compliance deadlines, and project milestones. The system is working as designed.

Two meetings to audit right now

You can test this in five minutes.

Take two meetings from the past week: one team standup or planning meeting, and one project review. For each, answer two questions.

Was AI mentioned at all? Not a dedicated AI discussion. A mention. A reference. A question. Anything. If AI was not mentioned, the meeting told your team what they needed to know.

Did anyone on the team raise an AI-related question, concern, or observation? If not, the meeting did not provide space for it. The team may have concerns but no forum to voice them.

If AI was absent from both meeting types, the meeting rhythm is actively working against adoption. Not passively. Actively.

If AI appeared in one but not the other, the coverage is inconsistent. The team gets a mixed message: AI matters when the manager remembers to mention it. That inconsistency reads as low priority.
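If you prefer to see the logic written down, the audit can be sketched in a few lines of Python. This is a minimal illustration, not a tool I am prescribing. The class, field, and function names are hypothetical, and the "present" label for AI appearing in both meetings is my assumption, since the audit above only describes the absent and inconsistent cases.

```python
from dataclasses import dataclass


@dataclass
class MeetingAudit:
    """One audited meeting. Field names are illustrative assumptions."""
    name: str
    ai_mentioned: bool    # Question 1: was AI mentioned at all?
    team_raised_ai: bool  # Question 2: did anyone on the team raise AI?


def read_coverage(meetings: list[MeetingAudit]) -> str:
    """Classify AI coverage across the audited meetings."""
    mentions = [m.ai_mentioned for m in meetings]
    if not any(mentions):
        # Absent everywhere: the rhythm is actively working against adoption.
        return "absent"
    if not all(mentions):
        # Mixed message: AI matters only when the manager remembers it.
        return "inconsistent"
    # Assumed label: the audit text does not describe this case.
    return "present"


def meetings_without_forum(meetings: list[MeetingAudit]) -> list[str]:
    """Names of meetings where nobody on the team raised AI themselves."""
    return [m.name for m in meetings if not m.team_raised_ai]


# Hypothetical answers for last week's two meetings.
last_week = [
    MeetingAudit("team standup", ai_mentioned=True, team_raised_ai=False),
    MeetingAudit("project review", ai_mentioned=False, team_raised_ai=False),
]

print(read_coverage(last_week))
print(meetings_without_forum(last_week))
```

The two questions are kept separate on purpose. The first is about what the manager signals. The second is about whether the team had space to speak.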

The results will tell you more about your adoption trajectory than any dashboard. The dashboard measures what people did with the tools. The audit measures whether the organisational environment gives them a reason to.

Why the silence compounds

In my 5C Adoption Friction Model, adoption stalls when teams do not trust the priority (Credibility), do not feel agency (Control), or cannot see incentives (Consequences). AI’s absence from routine meetings creates all three at once. The leader’s behaviour does not match the stated priority. The team has no forum to discuss AI on their terms. And nobody is addressing what happens if they move or if they wait.

The cost compounds in a specific way. Silence kills peer sharing, which kills experimentation, which kills the small wins that would generate more mentions. The loop closes on itself. And each rotation does not just add a week to the adoption timeline. It deepens the team’s conviction that AI is not a real priority.

After a month of that, reintroducing AI feels like a restart, not a continuation. The launch momentum is spent. And the first sign of trouble will not arrive as a question in a standup. It will arrive as a stalled metric in a quarterly review, months after the problem started.

This is another form of the misdiagnosis pattern. The leader sees adoption stalling and diagnoses a Capability gap. More training. A better tool. But the actual friction is structural. The meeting rhythm does not accommodate AI.

If you are not sure what signals your meetings are sending, that uncertainty is what the AI Change Leadership Intensive is designed for. $500, 90 minutes, and you will leave knowing which of your routines are driving adoption and which are quietly killing it. The Intensive includes a full refund guarantee if you do not leave with at least one actionable insight.

You have audited two meeting types. But there is a third conversation where your team would actually tell you the truth about AI. The one-on-one. That is where people say the things they will not say in a group: that they do not understand the tools, that AI is making their work slower, that they are worried about what this means for their role. The audit for the one-on-one, and the manager scripts for all three meeting types, are below.

Subscribe to Getting AI To Work by Brennan McDonald to keep reading this post and get 7 days of free access to the full post archives.