The 5 hidden friction points blocking your team’s AI adoption

The AI adoption gap is increasingly a people problem, not a technology problem

Hi there,

I used to waste a lot of time with lots of different browser tabs open, trying to stay across everything. Now I just get custom briefings on whatever I am interested in, delivered on a schedule. When I need to go deeper on something, I go off and run a deep research tool to produce a detailed report, drawing on a wide variety of sources including non-English ones. It is a much more targeted use of time than spending hours browsing the internet.

What is happening here is not a productivity hack. It is a shift in how information moves. For most of my corporate career, work was push-based. Slack pings, email notifications, CC lists, forwarded articles, the endless stream of things-possibly-worth-reading. Personal agents invert this. You pull what matters when you want it, and the only things pushed to you are the ones you set up for your own needs. You are interrupted only when something actually requires a decision or an action.

Even a year ago this was hard to set up. Now it is much easier with tools like OpenClaw or Hermes Agent. But pretty much no one I know in corporate roles actually works this way yet. They are still drowning in the push stream, still manually copying and pasting across systems, still doing work an AI agent could have done better, faster and cheaper twelve months ago.

The reasons are not technical.

The gap is not where most people think it is

The standard narrative about corporate AI adoption is that companies are behind because the technology is moving too fast, or because they lack the right tooling, or because they have not figured out their data strategy. I do not think any of that is the real story.

The real story is that AI adoption is bottlenecked on people. Specifically, on how people think about technology, what they are economically free to say and do inside their organisations, and what reference points they have for what is actually possible. Many decision-makers in large companies are still forming their views of AI through locked-down, restrictive corporate deployments. Even when those tools are using current models, the experience is often several steps behind the frontier. Their frame of reference is limited.

This matters because the gap between what is possible right now and what most organisations are actually doing is enormous, and it widens every month. Meanwhile, mainstream media coverage still oscillates between bubble framing, labour panic, and tool-of-the-week commentary, which misses the operational change already underway.

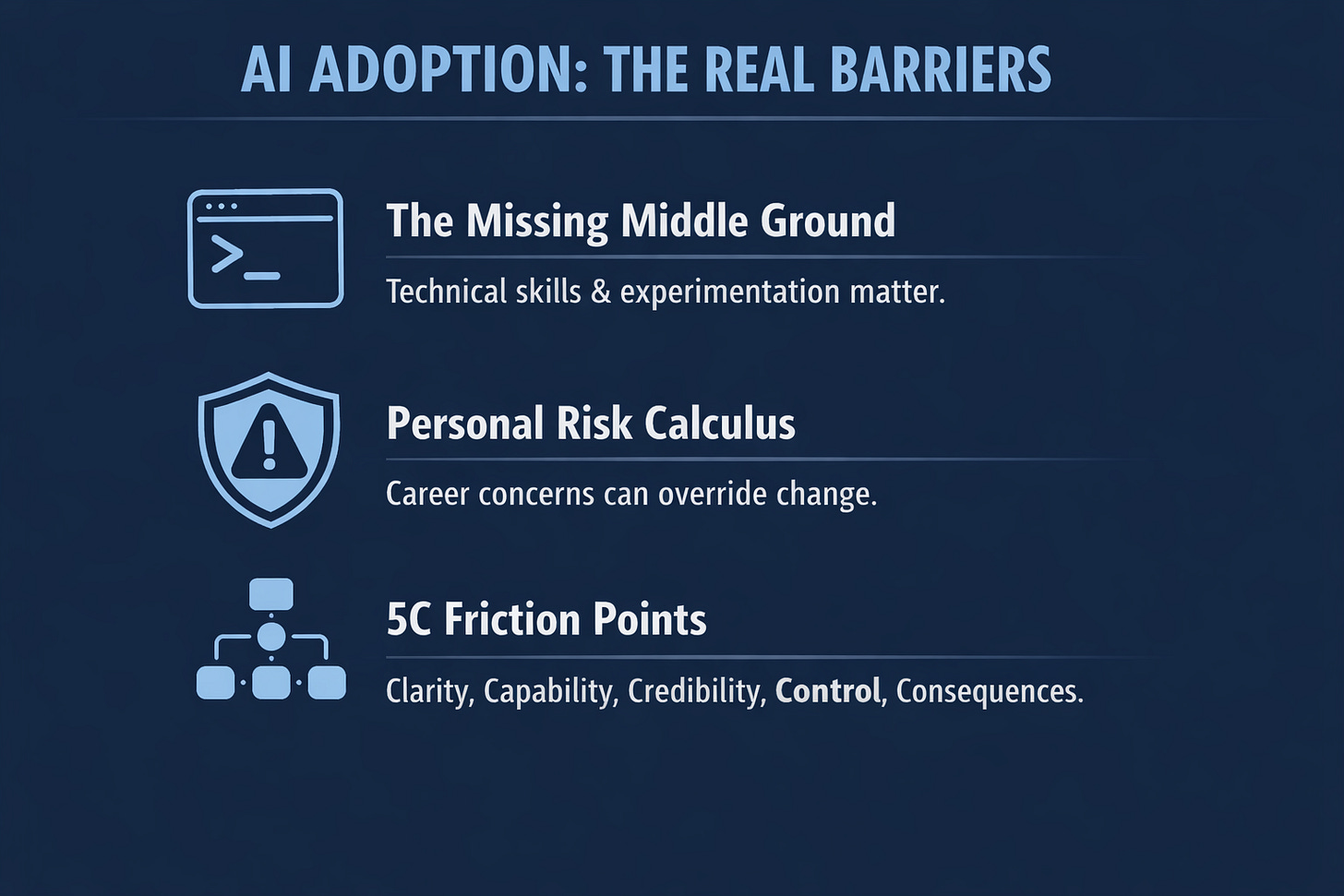

The symptom of all this is what I call cosmetic adoption: AI implemented visibly, but without changing how work is actually allocated, reviewed, staffed, or governed. Real adoption changes the work. Dashboards only record activity. The 5C Adoption Friction Model I have been developing diagnoses why organisations end up here, and which of the five friction points, Clarity, Capability, Credibility, Control, or Consequences, is actually blocking progress in a given team.

The uncomfortable part is that many organisations stall not because nobody knows what to do, but because the people with enough authority to change things often have strong personal reasons not to take visible risks. There are three structural reasons the gap persists, and they compound.

Reason one: the technical middle ground almost everyone skips

One of the more persistent myths in the AI discourse is that you do not need to be technical to get value from these tools. The counter-myth, equally unhelpful, is that unless you can code professionally you are going to be left behind.

My take is more nuanced, and it matters for how you invest your time.

You do not need a software engineering degree to work effectively with AI tools. But you do need a specific set of working behaviours that most people in corporate roles have not built yet. You need to be able to inspect a failure when something goes wrong, read error messages and logs, understand what context the model has and does not have, break a task into testable steps, check outputs against reality, and recognise when a tool is producing plausible-looking output that is actually wrong. Terminal comfort is a useful proxy for most of these skills. A lot of them are easier to build if you are not afraid of a command prompt.

I know this because I lived it. When I was a student I ran a Linux laptop for a few years. That period built a comfort with the command line that I did not recognise as a strategic asset until much later. When I started using Claude Code, I was immediately comfortable experimenting with it. The learning curve was not steep for me because I had already paid the tax years before.

The people getting the most leverage out of AI tools today are not the non-technical people, whatever the “anyone can use it” marketing says. They are people who can form a hypothesis, run an experiment, read the output critically, debug when something goes wrong, and iterate quickly. That is a technical posture, even if it is not a software engineering posture.

If you do not have this posture, you hit a ceiling quickly. You will build a few impressive-looking demos, automate a couple of tasks, and then plateau. The compounding benefits go to people who can go deeper, and the gap between the two groups is widening every quarter.

The fastest way to build the middle-ground posture, realistically, is to experiment on your personal devices, in your personal time, on your own side projects. Corporate AI deployments are locked down for good reasons, but they give you a misleadingly limited frame of reference. You have to go wider on your own.

Reason two: the personal risk calculus behind corporate caution

This next point is the one I think is most under-discussed in the AI adoption conversation, and it needs to be raised carefully because it applies to a lot of folks.

A lot of what shows up as “we need to be careful about AI” or “let us wait and see” or “compliance will need to look at this” is not purely about risk management. It also reflects the personal risk calculus of the people making the calls. Senior corporate roles often come with asymmetric downside: the personal cost of backing the wrong transformation can be much higher than the personal reward for backing the right one early. Caution in that environment is not irrational. But some of what gets labelled corporate risk management is career-risk management wearing the same clothes.

Executives who are otherwise experienced and capable can find themselves more cautious about sponsoring uncertain work than their formal authority would suggest. When the personal cost of being wrong in public is high and the personal reward for being right early is modest, the rational move is often to wait for consensus.

This matters for AI adoption because substantive transformation requires sponsorship from people willing to advocate for work that might not succeed. That is a harder ask of anyone whose personal circumstances make public failure costly. I do not think this is a character failing. It is a structural feature of how senior corporate careers are constructed, and it is one of the quiet reasons progress tends to stall at visible, defensible activity rather than substantive change. The standard brakes on new work, cost discipline and compliance review, can then provide cover for inaction without anyone having to name what is actually going on.

The honest move is to separate two types of caution. Caution that reflects a considered view of the specific risks to your organisation is strategic, and it deserves respect. Caution that is more about protecting your own position is a different thing. Both are understandable. Only one is actually strategy, and the distinction is worth naming to yourself even if you never name it out loud.

Reason three: what actually blocks AI adoption

The 5C Adoption Friction Model I have been writing about in this newsletter identifies five friction points that block AI adoption inside real organisations. Each one is a question your people are silently asking:

Clarity: What am I supposed to do differently tomorrow?

Capability: Do I know how to do it?

Credibility: Has leadership actually changed how they work, or are they just endorsing?

Control: Do I have agency over how AI changes my role?

Consequences: What happens if I simply do not engage?

Until those questions have real answers, the initiative does not change how work gets done, no matter how much is spent on tools and training.

The order matters. If Clarity is missing, training will not help. If Credibility is missing, communications will not help. If people feel no Control, adoption stays performative. If there are no Consequences, the old operating model wins by default. Fix them out of order and you compound the problem. Fix them in order and each one makes the next easier.
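If it helps to see the sequencing rule written down, here is a minimal sketch in Python. It assumes you can mark each of the five questions as having a real answer or not for a given team; the function name `first_blocker` and the example team are hypothetical, not part of the model itself. It simply walks the five friction points in the order listed above and returns the first one still unanswered.

```python
from typing import Mapping, Optional

# The five friction points, in the order the model lists them.
FIVE_C_ORDER = ["Clarity", "Capability", "Credibility", "Control", "Consequences"]


def first_blocker(answered: Mapping[str, bool]) -> Optional[str]:
    """Return the earliest friction point without a real answer, or None.

    `answered` maps each friction point to True when the team's silent
    question has a real answer. Missing keys count as unanswered.
    """
    for point in FIVE_C_ORDER:
        if not answered.get(point, False):
            return point
    return None


# Hypothetical team: people know what to do and how, but leadership
# has only endorsed AI, not changed how it works.
team = {
    "Clarity": True,
    "Capability": True,
    "Credibility": False,
    "Control": True,
    "Consequences": False,
}

print(first_blocker(team))  # Credibility
```

In this example Consequences is also unanswered, but the sketch points at Credibility first, which is the point of fixing them in order.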

The pattern I keep seeing is that leaders diagnose a Capability gap, prescribe training, and grow frustrated when nothing shifts. Wrong problem. Technical leaders default to capability because capability is what their role trains them to see. Communications leaders default to clarity. The actual blocker is usually Credibility, Control, or Consequences, and those are harder to address because they require leaders to examine their own behaviour rather than commission another programme.

When those friction points sit unaddressed, what you get is cosmetic adoption. The dashboards show usage. Training completion ticks up. The leadership team reviews the numbers and approves the next phase. Meanwhile, the actual deliverables look the same as they did six months ago. The organisation has spent real money to produce theatrical return, and worse, is about to scale the next phase on a foundation of compliance rather than change.

A lot of the so-called “AI layoffs” reported in the press fit this picture. When you look closely at what the company has actually done on AI, the answer is often “rolled out Copilot, integrated one SaaS tool, reduced headcount, attributed the change to AI.” Calling that an AI transformation is a stretch. It reads closer to a cost exercise presented through an AI lens.

The pressure that produces cosmetic adoption is real. Leaders are getting analyst questions on every earnings call. Institutional investors come in and ask what the AI strategy is, how costs are going to be cut, how margins are going to improve. Shareholders expect movement. Stakeholders expect direction. The path of least resistance is to produce visible motion without restructuring anything important.

Cosmetic adoption provides exactly enough cover to delay the real work, which is addressing the 5C friction points in the right sequence. Operating model compression, the idea I have written about before, where an organisation becomes systematically faster, better and cheaper because AI agents coordinate work that human middle managers used to coordinate, does not happen by accident. It requires sustained, uncomfortable effort from sponsors willing to restructure their own organisations and, in many cases, their own roles.

Most leaders know they need to move. Very few have worked through the 5C friction points in the right order. And cosmetic activity buys time without closing the distance.

The counterintuitive bit: large firms can win

The consensus narrative in tech is that AI-first startups will eat incumbents in every sector, the way cloud-native startups ate on-premises incumbents in the 2010s. Parts of this will be true. AI-first entrants will take products and services that incumbents thought were locked down, and they will do it faster than most incumbents realise.

But there is a counterintuitive flip side, and I think it is underappreciated.

Some very large companies are already doing an enormous amount of serious work in this space, leveraging the economies of scale and resources they have in ways a startup cannot match. If you are a truly large company and you commit properly to AI transformation, the return on being first in your sector with the best capability, the cleanest data, the most automated workflows and the deepest tooling leverage is much bigger than the equivalent return to a startup. You have more processes to compress, more data to clean, more workflows to automate, and more people to redeploy. The arithmetic is simply different at scale.

The competitive picture over the next few years will look less uniform than the “startups always win” framing suggests. The incumbents that get serious will pull further ahead. The incumbents that stay cosmetic will get eaten. The spread between these two groups inside the same industry will be wider than anything I have seen in my career.

For a concrete example of where this gets interesting, think about Claude’s new design capabilities, which compete directly with tools like Figma and Canva. The strategic question for a large company is not “which tool do we buy.” It is a question about operating model. If AI tools make acceptable first-pass design work available across the organisation, what should the design function actually become? A production queue? A standards body? A brand governance layer? A centre of excellence for high-value creative judgement? The head of design role does not disappear, but what it governs shifts from producing every asset to setting standards, protecting brand coherence, and deciding where human craft is still worth the cost.

These are not small questions. They affect headcount plans, budget allocations, career paths and, ultimately, cost structures. The companies asking these questions seriously right now will have a very different cost base in three years from the ones still debating whether to license the enterprise version of a particular SaaS tool.

What this means for you, this week

If you are a senior person in a corporate role, there are three moves you can make before Friday.

First, build the technical middle ground. Not a computer science degree, but genuine comfort with the terminal, debugging instincts, and a personal reference point for what these tools can do at the frontier. You cannot build this through corporate deployments alone. You have to experiment on your own devices, on your own time, on your own side projects. This is part of the unpaid permanent part-time job we all now have, alongside staying healthy, exercising and sleeping properly. It is not optional if you want to remain competitive.

Second, run your own cosmetic adoption test. Open the last three deliverables your team produced. Not the dashboards, not the training completion metrics, the actual work. Can you see where AI changed the output, or was it used as an input and then smoothed back into a work product essentially identical to what the team would have produced six months ago? If the work looks the same, the adoption is cosmetic. That is your first honest number.

Third, ask one diagnostic question in your next one-on-one with someone on your team. Not “how is AI going.” Instead: “what would you do with AI if nothing was stopping you.” Whatever they say tells you which of the five friction points is live. A task they do not know how to approach points to Capability. A fear of doing something they are not supposed to do is Clarity or Control. A shrug and “no one really expects much” is Consequences. A comment about what leadership is or is not doing with AI points to Credibility.
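If you ask the question in several one-on-ones, a simple tally makes the pattern visible. The sketch below is illustrative only: the signal labels (`unsure_how`, `fear_of_overstepping`, `low_expectations`, `leadership_comment`) are hypothetical shorthand for the four kinds of answer described above, and you would still be the one classifying what each person actually said.

```python
from collections import Counter
from typing import Iterable

# Hypothetical shorthand for the four kinds of answer, mapped to the
# friction point(s) each one suggests.
SIGNAL_TO_FRICTION = {
    "unsure_how": ("Capability",),
    "fear_of_overstepping": ("Clarity", "Control"),
    "low_expectations": ("Consequences",),
    "leadership_comment": ("Credibility",),
}


def tally_friction(signals: Iterable[str]) -> Counter:
    """Count how often each friction point is suggested by the answers."""
    counts: Counter = Counter()
    for signal in signals:
        for point in SIGNAL_TO_FRICTION[signal]:
            counts[point] += 1
    return counts


# Hypothetical notes from three one-on-ones.
observed = ["leadership_comment", "fear_of_overstepping", "leadership_comment"]
counts = tally_friction(observed)

print(counts.most_common(1))  # [('Credibility', 2)]
```

An ambiguous answer such as fear of overstepping counts towards both Clarity and Control, so a follow-up question is still needed to tell them apart.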

Those three moves cost almost nothing and will tell you more about where your organisation actually is than another quarterly adoption report.

I keep developing the full diagnostic toolkit here. Paid subscribers get the 5C Decision Tree, the Compliance Theatre Diagnostic, and the playbooks for each of the five friction points. If you want to move from diagnosing to changing, that is where to go next.

Do you have a frame of reference for what is possible? Are you close enough to the frontier to make decisions that will still look sensible in twelve months? Most people cannot answer yes to these, and most organisations cannot either. That is the opportunity, and it is also the risk.

Not a bubble. A buildout.

The media’s default frame for AI is “bubble.” I do not think that fits what is actually happening.

The major AI labs are rate-limiting their users rather than cutting prices, which is what you do when demand is outrunning supply. Performance and latency are nowhere near what mature cloud services deliver. The hundreds of billions flowing into the underlying infrastructure each year do not go to an industry in terminal decline.

Bubbles do not look like this. Early-phase infrastructure buildouts do, where demand is real, supply is constrained, and the people paying closest attention are positioning themselves for what comes next.

The question is whether you are going to be one of them.

Best regards,

Brennan
