What I got wrong about AI adoption

Why AI initiatives stall inside real organisations

Hi there,

About fourteen months ago, I quit a corporate job. I’d spent over 12 years in financial services working in technology and change, delivering projects across Australia and New Zealand. I had a young family. It was a calculated bet, not a leap of faith.

This publication, Getting AI To Work, is about why AI initiatives stall inside real organisations, and what to do about it. That focus took me a while to find.

What pushed the decision was something specific. I had been experimenting with AI models in my own time, asking them questions about domains I knew well, areas where I had enough experience to judge the quality of the answer. The responses were so much better than anything a Google search could produce that it shocked me. I needed the space to dedicate real time to learning, experimenting, and building. That was not going to happen inside a corporate role.

So I left. And then I was sitting at a desk that was mine, in a house that was not quiet at all, writing a newsletter that few people were reading at that point.

The first few months were deliberate exploration. I wrote about vibe coding, about Gemini, about Studio Ghibli, about whatever caught my attention that week. If you go back through the archive, you can watch me think out loud. Some of those posts hold up. Some don’t. That is what exploration looks like when you are doing it in public.

What I did not expect was how quickly my technical range changed. I’ve always been reasonably technical: some SQL, some Python, comfortable with the command line. But I was not a developer. And there was no pathway to experiment with this technology at work.

Since leaving, I have been building with AI coding tools almost every day. An AML risk assessment readiness tool for Australian businesses. An analytics platform that automates analysis of complex regulatory disclosures for clearinghouses. Several data and analytics tools to support my research.

None of these existed fourteen months ago, and I did not have the technical ability to build them. That direct, practical understanding of what AI tools can do informs everything I write about adoption. The rate of capability improvement still astonishes me.

By mid-2025, I had landed on a set of big frameworks. Operating Model Compression. The Liquid Organisation. The Triple Boundary Framework. I thought that if I could describe the structural forces clearly enough, the right people would find it useful. And many did.

But something was off.

What I got wrong

The frameworks described the world. They did not tell anyone what to do next.

I was writing for leaders who had already decided AI mattered. They did not need another explanation of why the world was changing. They needed a way to figure out why their specific initiative was stuck, and what to do about it on Monday morning.

The technology was moving so fast that the bottleneck had shifted. By mid-2025, the tools were good enough. The models were good enough. The constraint was no longer technical. It was organisational. Bureaucracy, governance theatre, change management failures, the politics of getting people to do something different when they are not sure it is safe to try. That was the real constraint. And my frameworks were not addressing it.

I did not have a single moment of revelation. It accumulated. The posts that got traction were the practical ones. The posts that got silence were the theoretical ones. The readers who reached out were not asking “What does the future look like?” They were asking “My team is not using the tools and I do not know why.”

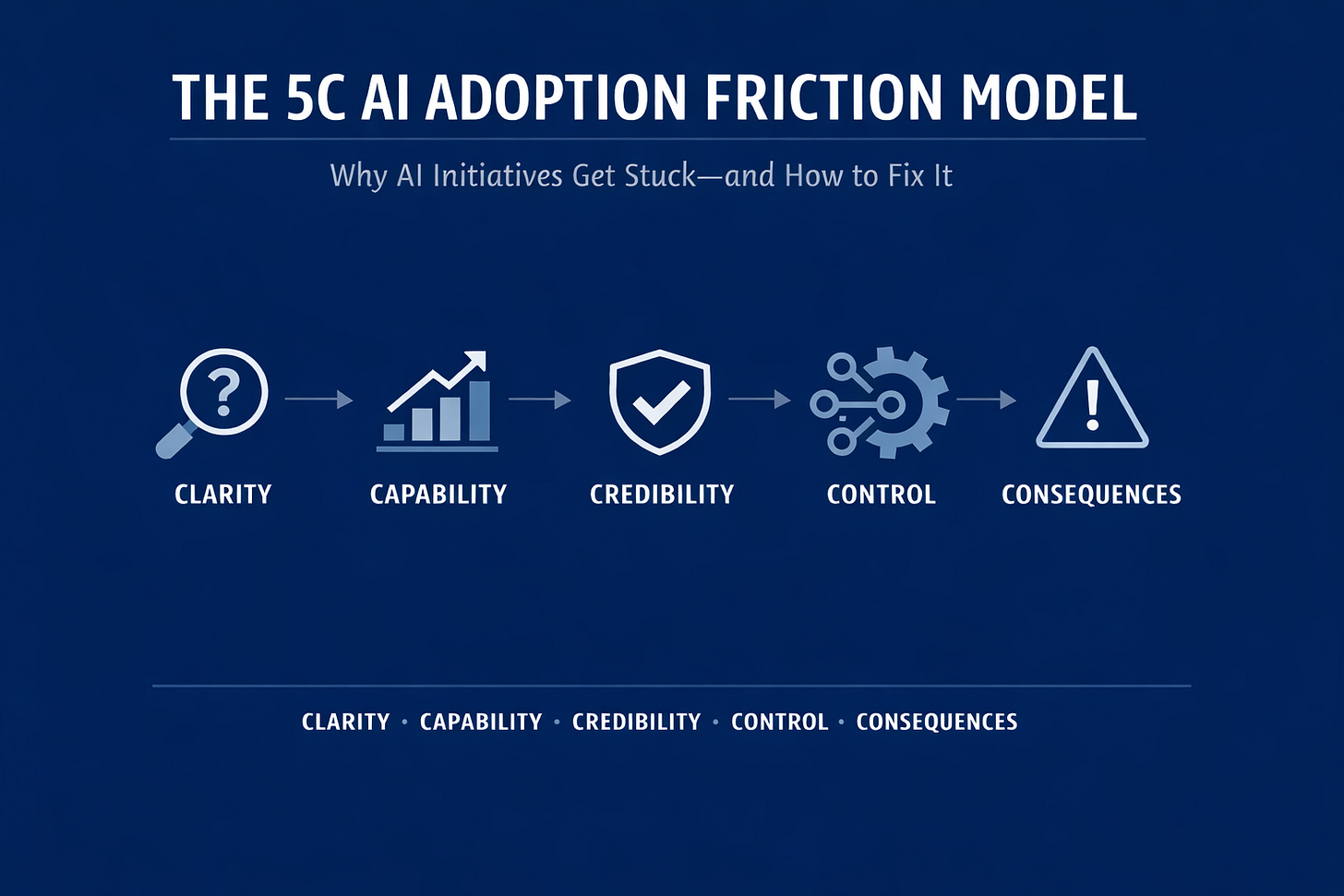

That question became the 5C Adoption Friction Model, which became the foundation for everything I have written since December.

What this publication is now

If you are new here, particularly if you have come across from Global Custody Pro, here is where things stand.

The core of the publication right now is a diagnostic series on AI adoption friction. Each article names a specific friction point that blocks AI adoption and walks you through how to identify it, why it persists, and what to do about it. But I also write about whatever else I am thinking about, and that will not change.

The underlying framework is the 5C model: Clarity, Capability, Credibility, Control, Consequences. Five friction points. A specific sequence. Fix them out of order and you compound the problems. Fix them in order and each solution makes the next one easier.

For those coming from Global Custody Pro: this is different content. Global Custody Pro focused on AI transformation in custody and post-trade operations. This publication is industry-neutral.

The patterns I write about here show up in financial services, yes, but they also show up in healthcare, in professional services, in government, in any organisation where AI has been introduced but not adopted. The friction is human, not technical. The industry is incidental.

Every article stands on its own. The foundational article that introduces the full framework is the first one listed under Start here below. Start there if you want the complete picture, or start with whichever title sounds like your problem.

What paid subscribers receive

Each article includes a diagnostic tool for paid subscribers: conversation scripts, audit frameworks, action plans, checklists. They are practical tools designed to be used in a real conversation with a real team, not filed away. The library grows with each article, and paid subscribers have access to the full archive.

Start here

“AI Adoption Is Stalling. More Training Won’t Fix It.” introduces the full 5C model. Every article since sits on top of it.

“How to Unblock Your AI Roadmap in One Conversation” gives you a single conversation to figure out what is blocking your team.

“Operating Model Compression: A 2025 AI Year in Review” is the bridge between the earlier framework writing and the diagnostic series.

“Why Smart Leaders Are Still Hesitant About AI” names the patterns behind executive hesitation that most commentary misses.

If you follow my Substack Notes or my other social media channels, you already know I think out loud there regularly. I am going to start doing more of that in the newsletter itself. Alongside the main articles, I will publish a shorter weekly notes post covering what I am reading, what I am building, and observations that do not fit neatly into a diagnostic framework but are worth sharing. It is the kind of writing that got me started in the first place.

If you have been reading along, thank you. If you are new, welcome.

I would like to hear where your own team is getting stuck right now: Clarity, Capability, Credibility, Control, or Consequences?

Hit reply to this email or send me a DM.

Normal service resumes on Wednesday.

Best regards,

Brennan