Six Months Into Your AI Journey. Nothing Has Changed.

It hasn't been cancelled, but it hasn't succeeded either. Three data points to check today.

Hi there,

Start here if you are new to this series: Your AI Training Isn’t Working. Here’s Why.

The Quiet Failure Mode Nobody Talks About

Last week’s essay explored why leaders avoid the hard question about their AI initiative: is it actually changing how work gets done? This week asks what happens when they keep avoiding it.

There is a kind of AI initiative that is harder to spot than the ones that fail visibly. It has not been cancelled. It has not been declared a success. It is still running. The conversations have not changed since month two. The pilot has not moved past pilot.

Nothing has visibly broken. Nothing has visibly worked either.

This is the quiet failure mode. Far more common than spectacular failure, and far more costly, because it consumes time and goodwill without showing that anything needs to change.

The pattern is recognisable. Six months of invisible stagnation, followed by a crisis that should have been a conversation three months earlier. The 5C Adoption Friction Model maps the forces that cause this. Stagnation is what happens when those forces sit ignored long enough that the initiative stops generating friction at all.

The First Three Months

In month one, there was energy. You ran a launch meeting. You explained the why. You announced the first pilots. You took questions. You watched people’s faces. Some excited. Some sceptical. Most paying attention. The conversation was forward-looking.

By month three, the conversations were widening. New roles were asking questions. Integration challenges were surfacing. Real friction was appearing, the kind that comes from people actually trying to use the tools in their work. It was messy, but it was movement.

What Month Six Looks Like

If progress is real, month six looks untidy. New people have entered the conversation. The regular check-ins are asking different questions than they asked in month two. The budget has shifted, because reality has changed. People are finding edge cases nobody anticipated and using the system in ways the designers did not expect.

When stagnation has set in, the opposite is true. In an exploratory programme, too much stability is usually not a sign of control. It is a sign that nothing is moving.

Same people, same questions, same tools, same budget, same answers.

Nothing in the operating reality has forced adaptation. The budget, line for line, matches the original plan, which in exploratory work usually means no one learned anything worth adjusting for. The energy is gone. Not the dramatic kind of failure that forces a decision. The kind that fades quietly until nobody believes it will actually work.

The Three Data Points

These do not require a consultant. They require a calendar, a set of meeting notes, and ten minutes.

Data Point 1: How many new names are in the room? If the same small group is still doing the work in month six, adoption has stagnated. You cannot scale something that is not moving. Check your meeting attendance. Check your channel membership. Count the names that were not there in month one.

Data Point 2: What new friction has shown up? Friction is how you know people are actually using something. If month six shows no friction that was not already there in month two, no one is using the tools enough to find new edge cases. Look at your support tickets, your feedback channels, your integration logs.

Data Point 3: What are your regular check-ins asking? A working initiative asks different questions in month six than in month two. If the conversation has not changed, the team is not learning. Put last month’s agenda next to the one from month two. Compare.

Check your calendar. Check your notes. Write the numbers down. This is your diagnostic.
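
If your attendance lists, friction notes, and agendas already live in a spreadsheet or a text file, the three comparisons above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the helper `new_items` and all of the sample names, friction notes, and agenda questions are hypothetical, and the only logic is "what is in the later list that was not in the earlier one".

```python
def new_items(baseline, current):
    """Return entries in `current` that do not appear in `baseline`.

    Comparison ignores case and surrounding whitespace; result is sorted.
    """
    seen = {item.strip().lower() for item in baseline}
    return sorted({item.strip() for item in current if item.strip().lower() not in seen})


# Hypothetical sample data; replace with your own notes.
month_one_names = ["Ana", "Ben", "Chloe"]
month_six_names = ["Ana", "Ben", "Chloe", "Dev", "Elif"]

month_two_friction = ["login errors", "unclear prompts"]
month_six_friction = ["Login errors", "unclear prompts"]

month_two_agenda = ["Who has access?", "When is the next training?"]
month_six_agenda = ["Who has access?", "Which workflows changed?"]

diagnostic = {
    "new names in the room": new_items(month_one_names, month_six_names),
    "new friction": new_items(month_two_friction, month_six_friction),
    "new check-in questions": new_items(month_two_agenda, month_six_agenda),
}

# Print a count and the items behind it for each data point.
for label, items in diagnostic.items():
    print(f"{label}: {len(items)} {items}")
```

In this made-up example, two new names and one new question sit alongside zero new friction, which is the kind of mixed result worth writing down and discussing rather than averaging away.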

The Cost of Waiting

By month three, stagnation is recoverable. The team has not yet burned out. A pivot looks like learning.

By month six, the cost rises. People have invested time and attention. Some have made bets on this direction. A pivot now means admitting those early months did not lead where they should have. The people who believed in the original direction will need a reason to believe in the new one.

By month nine, the cost is very high. The initiative has history. Stakeholders have positions. Some people have been promoted because they “led the AI transformation.” The change is no longer a learning conversation. It is political.

By month twelve, you are in crisis mode. “If we had made this call in month three, it would have been a course correction. If we had made it in month six, it would have required a difficult conversation. In month twelve, it requires an apology.”

If you are between month six and month nine, the decision window is narrowing.

The Decision That Changes the Trajectory

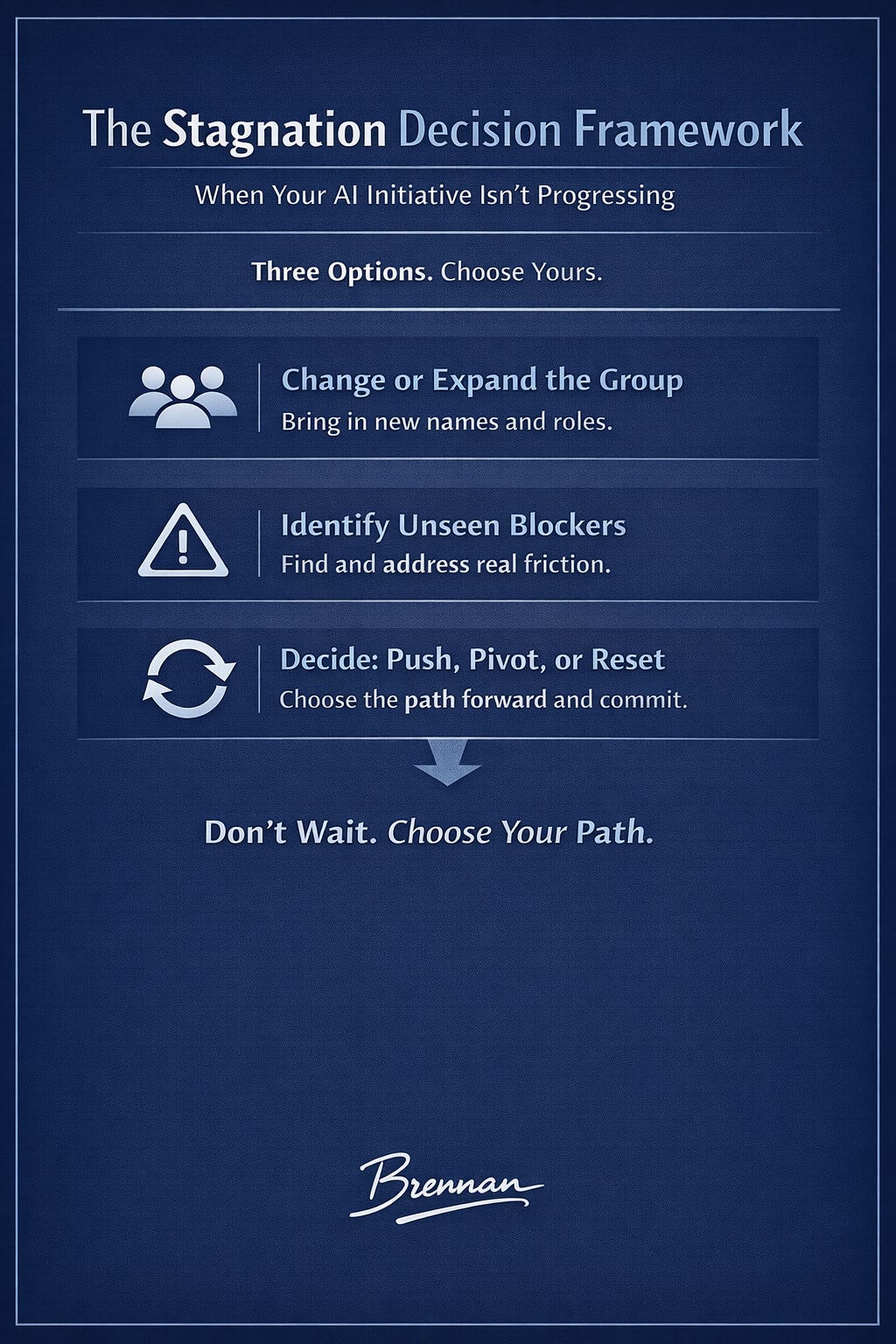

If those three data points confirmed what you suspected, the next question is harder. Push harder? Change the approach? Or acknowledge that six months of effort has not produced the progress it was supposed to?

This is uncomfortable. The sunk cost is real. The stakeholder expectations are real. But the cost of delay is also real. Every week the decision waits, the initiative sinks deeper. Not into success. Into habit. Into expectation. Into the calendar.

You now have the diagnosis. The decision comes next: push forward, pivot, or reset.