A Leadership Guide to Fixing AI Adoption at Scale

The failure is visible early. Few organisations recognise it.

Hi there,

Start here if you are new to this series: Your AI Training Isn’t Working. Here’s Why.

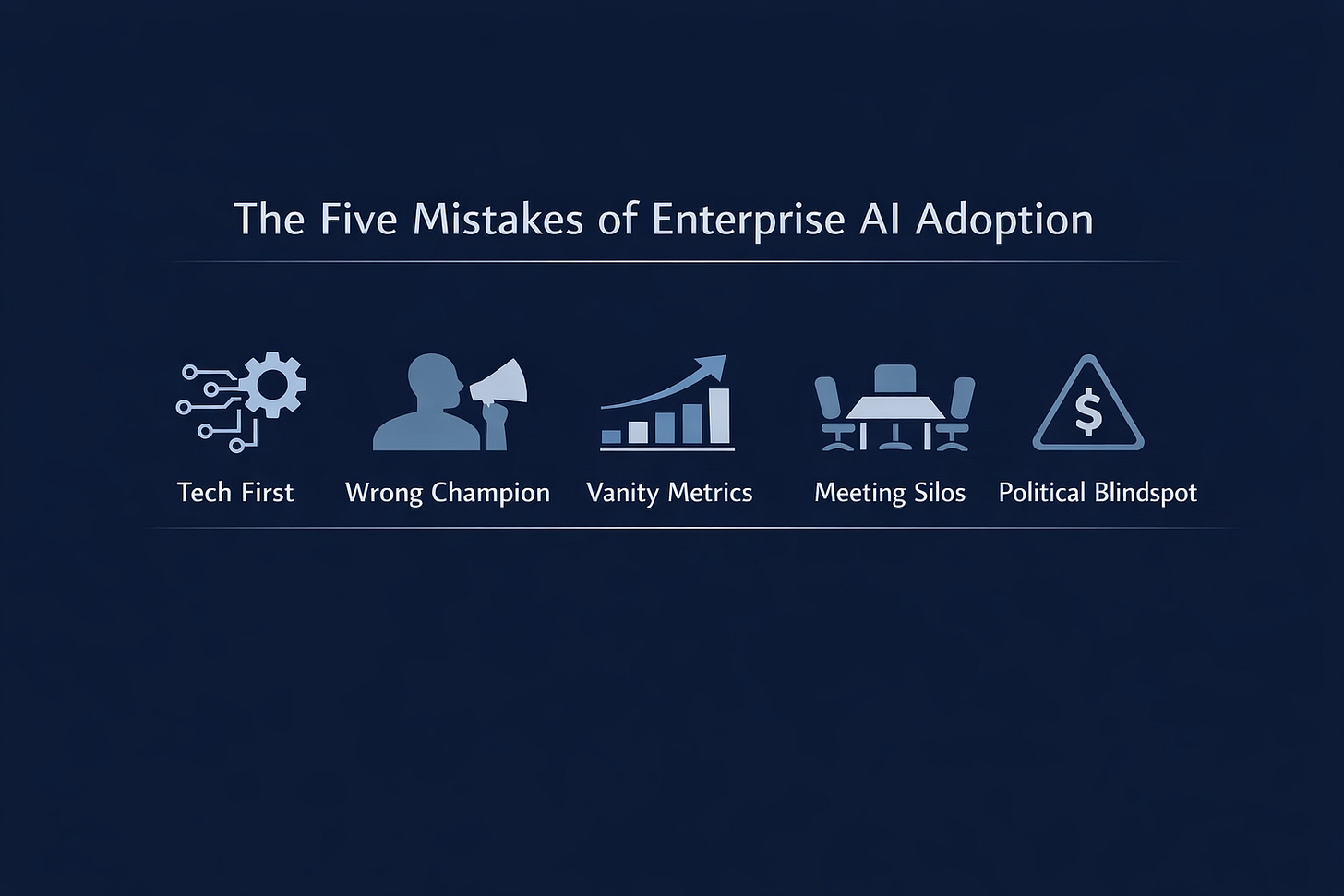

Enterprise AI adoption stalls in five predictable places. The most expensive of them is not the one most leaders watch for. It is about meetings: which ones AI reaches, and which ones it does not.

None of these are failures of competence. The default pathways in organisations push toward these exact choices. That is what makes them worth examining: they are structural failures, and structures have authors.

Most leaders are not making all five. But whichever one is active usually costs more than it appears to, and the next one in the sequence is often close behind.

Mistake One: Treating Adoption as a Technology Problem

Leaders invest in tools first and ask later why adoption is stalling. They buy enterprise AI platforms. They build security architecture. They negotiate vendor agreements. And then they watch the adoption curve flatten after three months.

This happens because they have solved the wrong problem.

The friction in enterprise AI adoption is rarely a technology problem, at least not by the time it shows up. Once the platform has been bought, approved, and deployed, the binding constraint shifts from the model to belief, permission, trust, workflow, and incentives. The blockage has moved. Most leaders have not. People do not understand why they should change. Or they understand it and they do not trust it. Or they trust it and they do not have permission to use it. Or they have permission and they do not know how.

Better diagnostic questions start with the people. Who needs to believe this is valuable? Which conversation needs to happen before the technology matters? What would make a sceptical person in this room less sceptical? The work is there, in conversation rather than in procurement.

You end up with a fully deployed technology that no one actually uses, and the erosion of trust that comes from investing in tools no one wanted.

Mistake Two: Choosing the Wrong Champion

The second mistake follows quickly from the first. You need a champion to drive adoption. That is correct. But most leaders choose the wrong person.

They choose the enthusiast. The person who got excited about AI before anyone else. The person who already uses it in their inbox, who has seen the value, who wants to talk about it constantly. They think: this person will convince everyone else.

What actually happens is the opposite.

The enthusiast raises fear in the room, not interest, because the enthusiast is not like the rest of the team. The enthusiast absorbs the learning curve more easily, bears less reputational risk in being early, and is not representative of the median employee. The rest of the team sees someone whose skills and confidence are already high and thinks: maybe I need to be someone else to use this.

The person you actually need is the trusted sceptic. The person everyone respects. The person who does their job well without AI and has asked hard questions about why this matters. When that person says, “I tried it. I was wrong about this,” other people listen. When the enthusiast says it, the room assumes the enthusiast is selling something.

The cost of choosing the enthusiast: you alienate the very people whose adoption would prove the technology works. You turn it into a special interest instead of a normal tool.

Mistakes one and two stall adoption. Three, four, and five teach the organisation to hide that it is stalling.

Paid subscribers get mistakes three, four, and five; the compounding effect; the full Recovery Protocol map; and the attached protocol document with three specific actions for each mistake.

Subscribe to Getting AI To Work by Brennan McDonald to keep reading this post and get 7 days of free access to the full post archives.